
In the AI world, there’s a buzz in the air about a new AI language model released Tuesday by Meta: Llama 3.1 405B. The reason? It’s potentially the first time anyone can download a GPT-4-class large language model (LLM) for free and run it on their own hardware. You’ll still need some beefy hardware: Meta says it can run on a “single server node,” which isn’t desktop PC-grade equipment. But it’s a provocative shot across the bow of “closed” AI model vendors such as OpenAI and Anthropic.

“Llama 3.1 405B is the first openly available model that rivals the top AI models when it comes to state-of-the-art capabilities in general knowledge, steerability, math, tool use, and multilingual translation,” says Meta. Company CEO Mark Zuckerberg calls 405B “the first frontier-level open source AI model.”

In the AI industry, “frontier model” is a term for an AI system designed to push the boundaries of current capabilities. In this case, Meta is positioning 405B among the likes of the industry’s top AI models, such as OpenAI’s GPT-4o, Anthropic’s Claude 3.5 Sonnet, and Google’s Gemini 1.5 Pro.

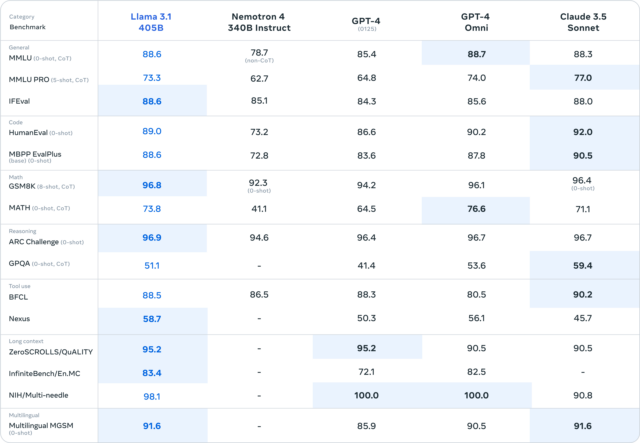

A chart published by Meta suggests that 405B gets very close to matching the performance of GPT-4 Turbo, GPT-4o, and Claude 3.5 Sonnet in benchmarks like MMLU (undergraduate-level knowledge), GSM8K (grade school math), and HumanEval (coding).

But as we’ve noted many times since March, these benchmarks aren’t necessarily scientifically sound and don’t convey the subjective experience of interacting with AI language models. In fact, this traditional slate of AI benchmarks is so generally useless to laypeople that even Meta’s PR department just posted a few images of numerical charts without attempting to explain their significance in any detail.

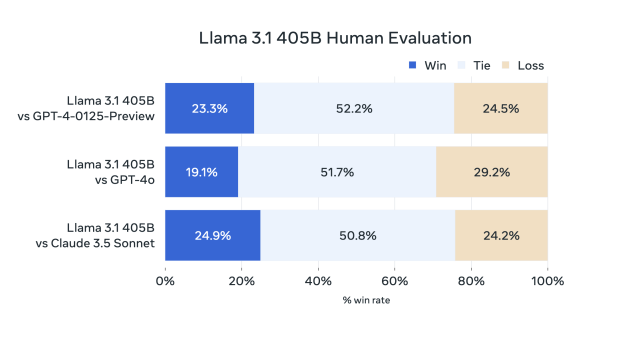

We’ve instead found that measuring the subjective experience of using a conversational AI model (through what might be called “vibemarking”) on A/B leaderboards like Chatbot Arena is a better way to judge new LLMs. In the absence of Chatbot Arena data, Meta has provided the results of its own human evaluations of 405B’s outputs that seem to show Meta’s new model holding its own against GPT-4 Turbo and Claude 3.5 Sonnet.

Whatever the benchmarks, early word on the street (after the model leaked on 4chan yesterday) seems to match the claim that 405B is roughly equivalent to GPT-4. It took a lot of expensive computer training time to get there, along with money, of which the social media giant has plenty to burn. Meta trained the 405B model on over 15 trillion tokens of training data scraped from the web (then parsed, filtered, and annotated by Llama 2), using more than 16,000 H100 GPUs.

So what’s with the 405B name? In this case, “405B” means 405 billion parameters, and parameters are numerical values that store trained information in a neural network. More parameters translate to a larger neural network powering the AI model, which generally (but not always) means more capability, such as a better ability to make contextual connections between concepts. But larger-parameter models have a tradeoff in needing more computing power (AKA “compute”) to run.
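To put the parameter count in concrete terms, here is a minimal back-of-the-envelope sketch of how much storage the weights alone would need at different numeric precisions. The precisions listed are illustrative assumptions, not configurations Meta has described, and the estimate ignores everything else a running model needs (activations, caches, and so on).

```python
# Rough storage estimate for the weights of a 405-billion-parameter model.
PARAMS = 405e9  # "405B" = 405 billion parameters (figure from the article)

# ASSUMPTION: common precisions chosen for illustration only.
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "8-bit": 1.0,
    "4-bit": 0.5,
}


def weight_gigabytes(num_params: float, bytes_per_param: float) -> float:
    """Return storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, size in BYTES_PER_PARAM.items():
        print(f"{name:>10}: {weight_gigabytes(PARAMS, size):,.1f} GB")
```

At 16 bits per parameter, the weights come to about 810 GB, which helps explain why the model calls for server-class hardware rather than a desktop PC.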

We’ve been expecting the release of a 400 billion-plus parameter model of the Llama 3 family since Meta gave word that it was training one in April, and today’s announcement isn’t just about the biggest member of the Llama 3 family: There’s an entirely new iteration of improved Llama models with the designation “Llama 3.1.” That includes upgraded versions of its smaller 8B and 70B models, which now feature multilingual support and an extended context length of 128,000 tokens (the “context length” is roughly the working memory capacity of the model, and “tokens” are chunks of data used by LLMs to process information).
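As a rough illustration of what a 128,000-token context length means in practice, the sketch below estimates whether a piece of text would fit. It assumes about four characters per token, a loose rule of thumb for English text rather than a property of Llama’s actual tokenizer, and the function names are hypothetical.

```python
import math

CONTEXT_LENGTH_TOKENS = 128_000  # Llama 3.1 context length cited in the article
CHARS_PER_TOKEN = 4.0  # ASSUMPTION: rough heuristic for English, not a tokenizer figure


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Crude token estimate based only on character count."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str, reserved_for_output: int = 0) -> bool:
    """Check whether the estimated prompt size leaves room for the reply."""
    budget = CONTEXT_LENGTH_TOKENS - reserved_for_output
    return estimate_tokens(text) <= budget


if __name__ == "__main__":
    sample = "word " * 50_000  # 250,000 characters of filler text
    print(estimate_tokens(sample), fits_in_context(sample, reserved_for_output=2_000))
```

A real application would count tokens with the model’s own tokenizer, since actual counts vary with language and content.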

Meta says that 405B is useful for long-form text summarization, multilingual conversational agents, and coding assistants, as well as for creating synthetic data used to train future AI language models. Notably, that last use case (allowing developers to use outputs from Llama models to improve other AI models) is now officially supported by Meta’s Llama 3.1 license for the first time.

Abusing the term “open source”

Llama 3.1 405B is an open-weights model, which means anyone can download the trained neural network files and run them or fine-tune them. That directly challenges a business model where companies like OpenAI keep the weights to themselves and instead monetize the model through subscription wrappers like ChatGPT or charge for access by the token through an API.

Fighting the “closed” AI model is a big deal to Mark Zuckerberg, who simultaneously released a 2,300-word manifesto today on why the company believes in open releases of AI models, titled “Open Source AI Is the Path Forward.” More on the terminology in a minute. But briefly, he writes about the need for customizable AI models that offer user control and encourage better data security, higher cost-efficiency, and better future-proofing, as opposed to vendor-locked solutions.

All that sounds reasonable, but undermining your competitors using a model subsidized by a social media war chest is also an efficient way to play spoiler in a market where you might not always win with the most cutting-edge tech. Open releases of AI models benefit Meta, Zuckerberg says, because he doesn’t want to get locked into a system where companies like his have to pay a toll to access AI capabilities, drawing comparisons to “taxes” Apple levies on developers through its App Store.

So, about that “open source” term. As we first wrote in an update to our Llama 2 launch article a year ago, “open source” has a very particular meaning that has traditionally been defined by the Open Source Initiative. The AI industry has not yet settled on terminology for AI model releases that ship either code or weights with restrictions (such as Llama 3.1) or that ship without providing training data. We’ve been calling these releases “open weights” instead.

Unfortunately for terminology sticklers, Zuckerberg has now baked the erroneous “open source” label into the title of his potentially historic aforementioned essay on open AI releases, so fighting for the correct term in AI may be a losing battle. Still, his usage annoys people like independent AI researcher Simon Willison, who likes Zuckerberg’s essay otherwise.

“I see Zuck’s prominent misuse of ‘open source’ as a small-scale act of cultural vandalism,” Willison told Ars Technica. “Open source should have an agreed meaning. Abusing the term weakens that meaning which makes the term less generally useful, because if someone says ‘it’s open source,’ that no longer tells me anything useful. I have to then dig in and figure out what they’re actually talking about.”

The Llama 3.1 models are available for download through Meta’s own website and on Hugging Face. They both require providing contact information and agreeing to a license and an acceptable use policy, which means that Meta can technically legally pull the rug out from under your use of Llama 3.1 or its outputs at any time.
