On Tuesday, Microsoft announced a new, freely available lightweight AI language model named Phi-3-mini, which is simpler and less expensive to operate than traditional large language models (LLMs) like OpenAI’s GPT-4 Turbo. Its small size is ideal for running locally, which could bring an AI model of similar capability to the free version of ChatGPT to a smartphone without needing an Internet connection to run it.

The AI field typically measures AI language model size by parameter count. Parameters are numerical values in a neural network that determine how the language model processes and generates text. They are learned during training on large datasets and essentially encode the model’s knowledge into quantified form. More parameters generally allow the model to capture more nuanced and complex language-generation capabilities but also require more computational resources to train and run.
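To make "parameter count" concrete, here is a minimal Python sketch that tallies the weights and biases of a small fully connected network. The function name `count_dense_parameters` and the layer sizes are illustrative assumptions, not anything taken from Microsoft's model. Real LLMs are built from transformer layers, but the bookkeeping follows the same idea: every learned number counts as one parameter.

```python
def count_dense_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the width of each layer, input layer first.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # one weight per input/output pair
        total += n_out         # one bias per output unit
    return total


if __name__ == "__main__":
    # Hypothetical toy network: 512 inputs, two hidden layers, 10 outputs.
    print(count_dense_parameters([512, 1024, 1024, 10]))
```

Even this toy network has over a million parameters, which gives a sense of scale for models counted in the billions.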

Some of the largest language models today, like Google’s PaLM 2, have hundreds of billions of parameters. OpenAI’s GPT-4 is rumored to have over a trillion parameters, spread over eight 220-billion-parameter models in a mixture-of-experts configuration. Both models require heavy-duty data center GPUs (and supporting systems) to run properly.

In contrast, Microsoft aimed small with Phi-3-mini, which contains only 3.8 billion parameters and was trained on 3.3 trillion tokens. That makes it ideal to run on consumer GPU or AI-acceleration hardware that can be found in smartphones and laptops. It is a follow-up to two previous small language models from Microsoft: Phi-2, released in December, and Phi-1, released in June 2023.
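A rough back-of-the-envelope calculation shows why parameter count matters for on-device use. The Python sketch below estimates the memory needed just to hold a model's weights at several numeric precisions. The precision table and the function name `weight_memory_gb` are assumptions for illustration, and the estimate ignores activations, the context cache, and runtime overhead, so real requirements will be higher.

```python
# Assumed storage cost per parameter at common precisions (bytes).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Estimate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    phi3_mini = 3.8e9           # parameter count reported for Phi-3-mini
    rumored_gpt4 = 8 * 220e9    # rumored figure only: eight 220-billion-parameter experts

    for precision in BYTES_PER_PARAM:
        small = weight_memory_gb(phi3_mini, precision)
        large = weight_memory_gb(rumored_gpt4, precision)
        print(f"{precision}: Phi-3-mini ~{small:.1f} GB, rumored GPT-4 ~{large:.0f} GB")
```

Under these assumptions, Phi-3-mini's weights come to roughly 7.6 GB at 16-bit precision and about 1.9 GB at 4-bit precision, which is within reach of a modern phone, while the rumored GPT-4 configuration would need thousands of gigabytes.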

Phi-3-mini features a 4,000-token context window, but Microsoft also released a 128K-token version called “phi-3-mini-128K.” Microsoft has also created 7-billion and 14-billion parameter versions of Phi-3 that it plans to release later and that it claims are “significantly more capable” than phi-3-mini.

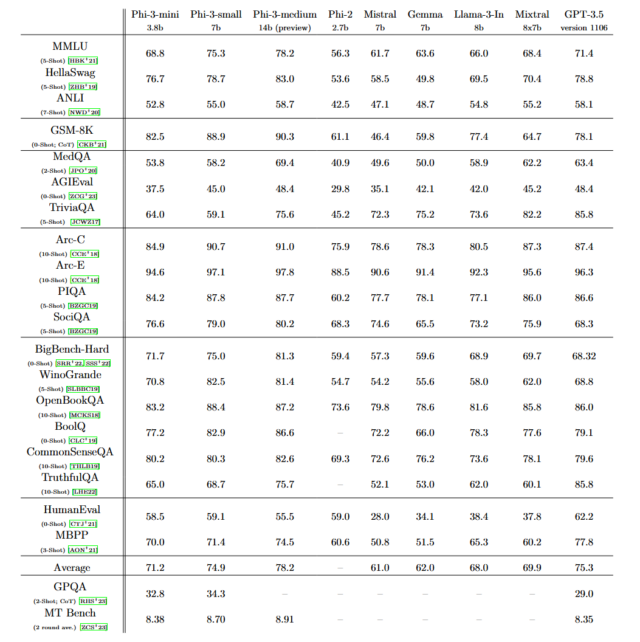

Microsoft says that Phi-3 features overall performance that “rivals that of models such as Mixtral 8x7B and GPT-3.5,” as detailed in a paper titled “Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone.” Mixtral 8x7B, from French AI company Mistral, uses a mixture-of-experts model, and GPT-3.5 powers the free version of ChatGPT.

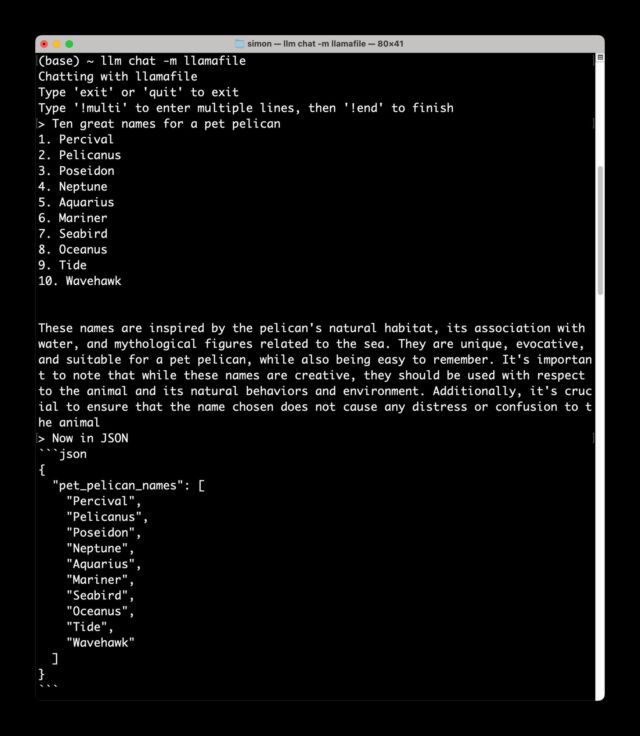

“[Phi-3] looks like it may be a surprisingly good small model if their benchmarks are reflective of what it can actually do,” said AI researcher Simon Willison in an interview with Ars. Shortly after providing that quote, Willison downloaded Phi-3 to his MacBook laptop locally and said, “I got it working, and it’s GOOD” in a text message sent to Ars.

How did Microsoft cram a capability similar to GPT-3.5, which has at least 175 billion parameters, into such a small model? Its researchers found the answer by using carefully curated, high-quality training data they initially pulled from textbooks. “The innovation lies entirely in our dataset for training, a scaled-up version of the one used for phi-2, composed of heavily filtered web data and synthetic data,” writes Microsoft. “The model is also further aligned for robustness, safety, and chat format.”

Much has been written about the potential environmental impact of AI models and datacenters themselves, including on Ars. With new techniques and research, it’s possible that machine learning experts may continue to increase the capability of smaller AI models, replacing the need for larger ones, at least for everyday tasks. That would theoretically not only save money in the long run but also require far less energy in aggregate, dramatically decreasing AI’s environmental footprint. AI models like Phi-3 may be a step toward that future if the benchmark results hold up to scrutiny.

Phi-3 is immediately available on Microsoft’s cloud service platform Azure, as well as through partnerships with machine learning model platform Hugging Face and Ollama, a framework that allows models to run locally on Macs and PCs.
