Nvidia / Benj Edwards

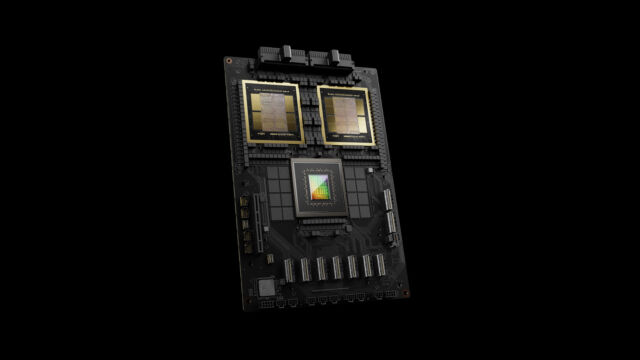

On Monday, Nvidia unveiled the Blackwell B200 tensor core chip, the company’s most powerful single-chip GPU, with 208 billion transistors. Nvidia claims it can reduce AI inference operating costs (such as running ChatGPT) and energy consumption by up to 25 times compared to the H100. The company also unveiled the GB200, a “superchip” that combines two B200 chips and a Grace CPU for even more performance.

The news came as part of Nvidia’s annual GTC conference, which is taking place this week at the San Jose Convention Center. Nvidia CEO Jensen Huang delivered the keynote Monday afternoon. “We need bigger GPUs,” Huang said during his keynote. The Blackwell platform will allow the training of trillion-parameter AI models that will make today’s generative AI models look rudimentary in comparison, he said. For reference, OpenAI’s GPT-3, launched in 2020, included 175 billion parameters. Parameter count is a rough indicator of AI model complexity.

Nvidia named the Blackwell architecture after David Harold Blackwell, a mathematician who specialized in game theory and statistics and was the first Black scholar inducted into the National Academy of Sciences. The platform introduces six technologies for accelerated computing, including a second-generation Transformer Engine, fifth-generation NVLink, RAS Engine, secure AI capabilities, and a decompression engine for accelerated database queries.

Several major organizations, such as Amazon Web Services, Dell Technologies, Google, Meta, Microsoft, OpenAI, Oracle, Tesla, and xAI, are expected to adopt the Blackwell platform, and Nvidia’s press release is replete with canned quotes from tech CEOs (key Nvidia customers) like Mark Zuckerberg and Sam Altman praising the platform.

GPUs, once only designed for gaming acceleration, are especially well suited for AI tasks because their massively parallel architecture accelerates the immense number of matrix multiplication tasks necessary to run today’s neural networks. With the dawn of new deep learning architectures in the 2010s, Nvidia found itself in an ideal position to capitalize on the AI revolution and began designing specialized GPUs just for the task of accelerating AI models.
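To make the matrix multiplication point concrete, here is a minimal Python sketch of a single neural network layer written as a matrix product. The input and weight values are made up for illustration, and the `matmul` helper is hypothetical, not taken from any Nvidia library. Each output cell is an independent dot product, which is the kind of work a GPU can spread across many cores at once.

```python
# Illustrative sketch only: a tiny "dense layer" as a matrix multiplication.
# The numbers below are invented for the example.

def matmul(a, b):
    """Multiply matrix a (rows x inner) by matrix b (inner x cols)."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Every (i, j) cell depends only on row i of a and column j of b,
    # so all cells could be computed at the same time on parallel hardware.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]

inputs = [[1.0, 2.0], [3.0, 4.0]]                # 2 samples, 2 features each
weights = [[0.5, -1.0, 0.0], [1.5, 2.0, 1.0]]    # 2 features -> 3 outputs

outputs = matmul(inputs, weights)
print(outputs)
```

Real models run the same operation on matrices with thousands of rows and columns, many times per request, which is why dedicated parallel hardware matters.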

Nvidia’s data center focus has made the company wildly rich and valuable, and these new chips continue the trend. Nvidia’s gaming GPU revenue ($2.9 billion in the last quarter) is dwarfed in comparison to data center revenue (at $18.4 billion), and that shows no signs of stopping.

A beast inside a beast

The aforementioned Grace Blackwell GB200 chip arrives as a key part of the new NVIDIA GB200 NVL72, a multi-node, liquid-cooled data center computer system designed specifically for AI training and inference tasks. It combines 36 GB200s (that’s 72 B200 GPUs and 36 Grace CPUs total), interconnected by fifth-generation NVLink, which links chips together to multiply performance.
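The chip counts follow directly from the GB200’s makeup as described above (two B200 GPUs and one Grace CPU per superchip). A quick Python check of that arithmetic, with constant names chosen here purely for illustration:

```python
# Chip-count arithmetic for the GB200 NVL72, using figures from the article.
# Constant names are illustrative, not Nvidia terminology.
GB200_PER_SYSTEM = 36   # GB200 superchips in one NVL72 system
B200_PER_GB200 = 2      # each GB200 combines two B200 GPUs...
GRACE_PER_GB200 = 1     # ...and one Grace CPU

total_gpus = GB200_PER_SYSTEM * B200_PER_GB200
total_cpus = GB200_PER_SYSTEM * GRACE_PER_GB200

print(f"B200 GPUs: {total_gpus}, Grace CPUs: {total_cpus}")
```

The “72” in the NVL72 name matches the resulting GPU count.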

“The GB200 NVL72 provides up to a 30x performance increase compared to the same number of NVIDIA H100 Tensor Core GPUs for LLM inference workloads and reduces cost and energy consumption by up to 25x,” Nvidia said.

That kind of speed-up could potentially save time and money while running today’s AI models, but it will also allow for more complex AI models to be built. Generative AI models, like the kind that power Google Gemini and AI image generators, are famously computationally hungry. Shortages of compute power have widely been cited as holding back progress and research in the AI field, and the search for more compute has led to figures like OpenAI CEO Sam Altman trying to broker deals to create new chip foundries.

While Nvidia’s claims about the Blackwell platform’s capabilities are significant, it’s worth noting that its real-world performance and adoption of the technology remain to be seen as organizations begin to implement and utilize the platform themselves. Competitors like Intel and AMD are also looking to grab a piece of Nvidia’s AI pie.

Nvidia says that Blackwell-based products will be available from various partners starting later this year.
