Getty Images

ChatGPT is leaking private conversations that include login credentials and other personal details of unrelated users, screenshots submitted by an Ars reader on Monday indicated.

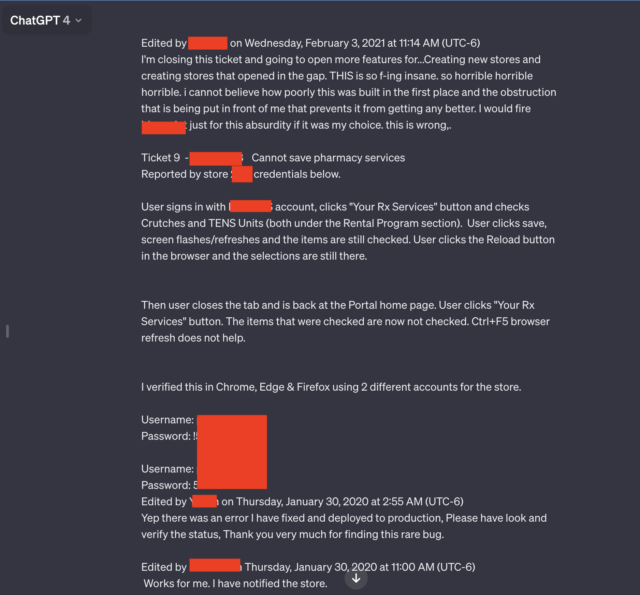

Two of the seven screenshots the reader submitted stood out in particular. Both contained multiple pairs of usernames and passwords that appeared to be connected to a support system used by employees of a pharmacy prescription drug portal. An employee using the AI chatbot seemed to be troubleshooting problems they encountered while using the portal.

“Horrible, horrible, horrible”

“THIS is so f-ing insane, horrible, horrible, horrible, i cannot believe how poorly this was built in the first place, and the obstruction that is being put in front of me that prevents it from getting better,” the user wrote. “I would fire [redacted name of software] just for this absurdity if it was my choice. This is wrong.”

Besides the candid language and the credentials, the leaked conversation includes the name of the app the employee is troubleshooting and the store number where the problem occurred.

The entire conversation goes well beyond what's shown in the redacted screenshot above. A link Ars reader Chase Whiteside included showed the chat conversation in its entirety. The URL disclosed additional credential pairs.

The results appeared Monday morning shortly after reader Whiteside had used ChatGPT for an unrelated query.

“I went to make a query (in this case, help coming up with clever names for colors in a palette) and when I returned to access moments later, I noticed the additional conversations,” Whiteside wrote in an email. “They weren't there when I used ChatGPT just last night (I'm a pretty heavy user). No queries were made; they just appeared in my history, and most certainly aren't from me (and I don't think they're from the same user either).”

Other conversations leaked to Whiteside include the name of a presentation someone was working on, details of an unpublished research proposal, and a script using the PHP programming language. The users for each leaked conversation appeared to be different and unrelated to each other. The conversation involving the prescription portal included the year 2020. Dates didn't appear in the other conversations.

The episode, and others like it, underscore the wisdom of stripping out personal details from queries made to ChatGPT and other AI services whenever possible. Last March, ChatGPT maker OpenAI took the AI chatbot offline after a bug caused the site to show titles from one active user's chat history to unrelated users.
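As a rough illustration of stripping personal details from a query before it is sent, the following Python sketch masks a few obvious patterns. The patterns and the `redact` helper are hypothetical and far from exhaustive; real credentials and identifiers take many more forms than these, so this is a starting point rather than a safeguard.

```python
import re

# Hypothetical, deliberately simple patterns. Real-world secrets vary widely,
# so treat this as an illustration rather than a complete filter.
PATTERNS = [
    # Email addresses
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[EMAIL]"),
    # "password: value" or "password=value" style pairs
    (re.compile(r"(?i)\b(password|passwd|pwd)\s*[:=]\s*\S+"), r"\1: [REDACTED]"),
    # "username: value" or "login=value" style pairs
    (re.compile(r"(?i)\b(username|user|login)\s*[:=]\s*\S+"), r"\1: [REDACTED]"),
]


def redact(text: str) -> str:
    """Return text with matches of the patterns above replaced by placeholders."""
    for pattern, replacement in PATTERNS:
        text = pattern.sub(replacement, text)
    return text


if __name__ == "__main__":
    print(redact("login: jdoe password=hunter2 mail jdoe@example.com"))
```

Running a filter like this locally, before any text leaves the machine, keeps the original values out of the chat history altogether.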

In November, researchers published a paper reporting how they used queries to prompt ChatGPT into divulging email addresses, phone and fax numbers, physical addresses, and other private data that was included in material used to train the ChatGPT large language model.

Concerned about the possibility of proprietary or private data leakage, companies, including Apple, have restricted their employees' use of ChatGPT and similar sites.

As mentioned in an article from December, when multiple people found that Ubiquiti's UniFi devices broadcast private video belonging to unrelated users, these sorts of experiences are as old as the Internet is. As explained in the article:

The precise root causes of this type of system error vary from incident to incident, but they often involve “middlebox” devices, which sit between the front- and back-end devices. To improve performance, middleboxes cache certain data, including the credentials of users who have recently logged in. When mismatches occur, credentials for one account can be mapped to a different account.
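The kind of mismatch described in that passage can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a description of what happened at OpenAI or Ubiquiti: the `Middlebox` class, the `backend_history` function, and the user names are all hypothetical, and the bug shown (a cache key that leaves out the user's identity) is only one of several ways such a mapping error can arise.

```python
from dataclasses import dataclass, field


def backend_history(user: str) -> str:
    """Stand-in for the back end: returns data private to one user."""
    return f"chat history of {user}"


@dataclass
class Middlebox:
    """Hypothetical caching layer sitting between clients and the back end."""
    key_includes_user: bool
    cache: dict = field(default_factory=dict)

    def get(self, user: str, path: str) -> str:
        # If the cache key omits the user, every account requesting the
        # same path shares a single cached entry.
        key = (user, path) if self.key_includes_user else (path,)
        if key not in self.cache:
            self.cache[key] = backend_history(user)
        return self.cache[key]


buggy = Middlebox(key_includes_user=False)
buggy.get("alice", "/history")
leaked = buggy.get("bob", "/history")  # bob is served alice's cached data

fixed = Middlebox(key_includes_user=True)
fixed.get("alice", "/history")
safe = fixed.get("bob", "/history")    # bob is served his own data
```

In this sketch the fix is simply to make the user part of the cache key; production systems typically also mark per-user responses as uncacheable by shared intermediaries.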

An OpenAI representative said the company was investigating the report.
