
Effective compression is about finding patterns to make data smaller without losing information. When an algorithm or model can accurately guess the next piece of data in a sequence, it shows it is good at spotting these patterns. This links the idea of making good guesses, which is what large language models like GPT-4 do very well, to achieving good compression.
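The link between guessing and compressing can be made concrete. Under an entropy coder such as arithmetic coding, a symbol the model predicted with probability p costs roughly -log2(p) bits, so better guesses mean fewer bits. The sketch below is only an illustration of that relationship: the function name `ideal_code_length_bits` and the simple adaptive byte-frequency model are assumptions made for the example, not anything taken from the paper.

```python
import math
from collections import Counter


def ideal_code_length_bits(data: bytes) -> float:
    """Sum of -log2(p) over the sequence, where p is the probability an
    adaptive byte-frequency model (hypothetical, for illustration) assigned
    to each byte just before seeing it."""
    counts = Counter()
    total_bits = 0.0
    for seen_so_far, symbol in enumerate(data):
        # Laplace smoothing: every one of the 256 byte values starts with count 1.
        p = (counts[symbol] + 1) / (seen_so_far + 256)
        total_bits += -math.log2(p)
        counts[symbol] += 1
    return total_bits


predictable = b"a" * 1000          # easy to guess after a few bytes
varied = bytes(range(256)) * 4     # every byte value equally frequent

for name, sample in (("predictable", predictable), ("varied", varied)):
    bits = ideal_code_length_bits(sample)
    print(f"{name}: {bits / len(sample):.2f} bits per byte")
```

The predictable sequence costs far fewer bits per byte than the varied one, because the model quickly learns to assign high probability to the byte that actually comes next.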

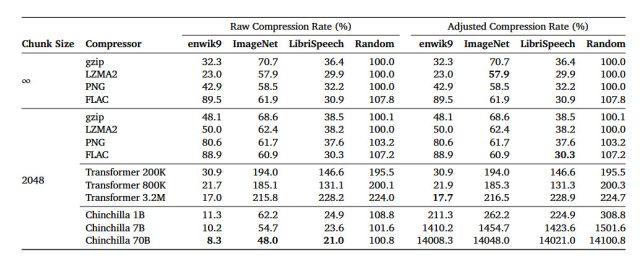

In an arXiv research paper titled “Language Modeling Is Compression,” researchers detail their discovery that the DeepMind large language model (LLM) called Chinchilla 70B can perform lossless compression on image patches from the ImageNet image database to 43.4 percent of their original size, beating the PNG algorithm, which compressed the same data to 58.5 percent. For audio, Chinchilla compressed samples from the LibriSpeech audio data set to just 16.4 percent of their raw size, outdoing FLAC compression at 30.3 percent.

In this case, lower numbers in the results mean more compression is taking place. And lossless compression means that no data is lost during the compression process. It stands in contrast to a lossy compression technique like JPEG, which sheds some data and reconstructs some of the data with approximations during the decoding process to significantly reduce file sizes.
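To make the two ideas in this paragraph concrete, here is a minimal sketch using Python's standard `zlib` module (a general-purpose DEFLATE compressor, not one of the compressors measured in the paper). It reports compressed size as a percentage of the original, the same style of figure quoted above, and checks that decompression returns the input byte for byte. The helper name `compressed_percent` and the sample text are assumptions made for illustration.

```python
import zlib


def compressed_percent(raw: bytes, level: int = 9) -> float:
    """Compressed size as a percentage of the original size (lower is better)."""
    if not raw:
        raise ValueError("cannot compute a ratio for empty input")
    return 100.0 * len(zlib.compress(raw, level)) / len(raw)


sample = b"the quick brown fox jumps over the lazy dog. " * 200

packed = zlib.compress(sample, 9)
restored = zlib.decompress(packed)

# Lossless: the round trip gives back exactly the original bytes.
print("round trip identical:", restored == sample)
print(f"compressed to {compressed_percent(sample):.1f} percent of original size")
```

A lossy codec such as JPEG would fail the equality check by design, since it discards some information to shrink the file further.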

The study’s results suggest that even though Chinchilla 70B was mainly trained to deal with text, it is surprisingly effective at compressing other types of data as well, often better than algorithms specifically designed for those tasks. This opens the door for thinking about machine learning models as not just tools for text prediction and writing but also as effective ways to shrink the size of various types of data.


Over the past two decades, some computer scientists have proposed that the ability to compress data effectively is akin to a form of general intelligence. The idea is rooted in the notion that understanding the world often involves identifying patterns and making sense of complexity, which, as mentioned above, is similar to what good data compression does. By reducing a large set of data into a smaller, more manageable form while retaining its essential features, a compression algorithm demonstrates a form of understanding or representation of that data, proponents argue.

The Hutter Prize is an example that brings this idea of compression as a form of intelligence into focus. Named after Marcus Hutter, a researcher in the field of AI and one of the named authors of the DeepMind paper, the prize is awarded to anyone who can most effectively compress a fixed set of English text. The underlying premise is that a highly efficient compression of text would require understanding the semantic and syntactic patterns in language, similar to how a human understands it.

So theoretically, if a machine can compress this data extremely well, it might indicate a form of general intelligence, or at least a step in that direction. While not everyone in the field agrees that winning the Hutter Prize would indicate general intelligence, the competition highlights the overlap between the challenges of data compression and the goals of creating more intelligent systems.

Along these lines, the DeepMind researchers claim that the connection between prediction and compression is not a one-way street. They posit that if you have a good compression algorithm like gzip, you can flip it around and use it to generate new, original data based on what it has learned during the compression process.

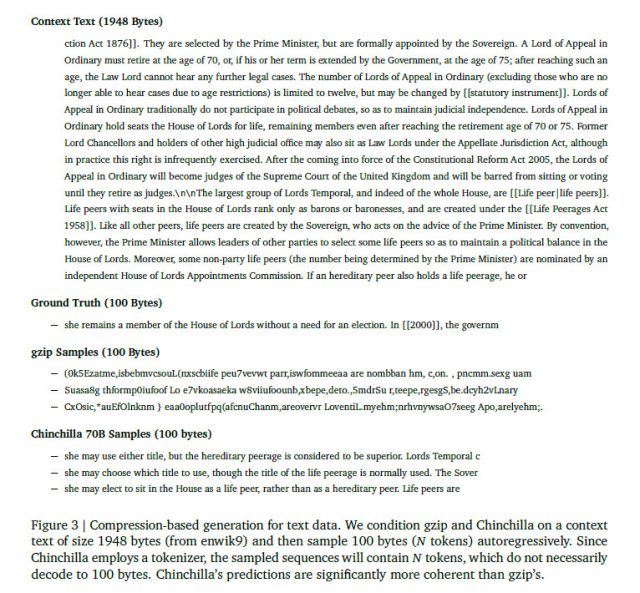

In one section of the paper (Section 3.4), the researchers carried out an experiment to generate new data across different formats (text, image, and audio) by getting gzip and Chinchilla to predict what comes next in a sequence of data after conditioning on a sample. Understandably, gzip did not do very well, producing completely nonsensical output, to a human mind at least. It demonstrates that while gzip can be forced to generate data, that data might not be very useful other than as an experimental curiosity. On the other hand, Chinchilla, which is designed with language processing in mind, predictably performed far better in the generative task.
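One simple way to turn a compressor into a predictor is to try every possible next byte and keep the one that makes the compressed output shortest. The sketch below illustrates that idea and is not necessarily the exact procedure used in the paper. It uses Python's `zlib` module, which implements DEFLATE, the same algorithm gzip uses. The function names `predict_next_byte` and `generate` and the prompt are assumptions for this example.

```python
import zlib


def predict_next_byte(context: bytes) -> int:
    """Return the byte value that yields the shortest compressed length
    when appended to the context (ties go to the lowest byte value)."""
    return min(
        range(256),
        key=lambda b: len(zlib.compress(context + bytes([b]), 9)),
    )


def generate(context: bytes, n_bytes: int) -> bytes:
    """Greedily extend the context by n_bytes, one predicted byte at a time."""
    out = bytearray(context)
    for _ in range(n_bytes):
        out.append(predict_next_byte(bytes(out)))
    return bytes(out)


prompt = b"abcabcabcabcabcabc"
continuation = generate(prompt, 8)
print(continuation)
```

Because compressed lengths are whole bytes, many candidates tie at each step, which gives a rough sense of why a general-purpose compressor makes a weak generator compared with a model that assigns a fine-grained probability to every possible next symbol.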


While the DeepMind paper on AI language model compression has not been peer-reviewed, it provides an intriguing window into potential new applications for large language models. The relationship between compression and intelligence is a matter of ongoing debate and research, so we will likely see more papers on the topic emerge soon.
