In the world of deep-learning AI, the ancient board game Go looms large. Until 2016, the best human Go player could still defeat the strongest Go-playing AI. That changed with DeepMind’s AlphaGo, which used deep-learning neural networks to teach itself the game at a level humans cannot match. More recently, KataGo has become popular as an open source Go-playing AI that can beat top-ranking human Go players.

Last week, a group of AI researchers published a paper outlining a method to defeat KataGo by using adversarial techniques that exploit KataGo’s blind spots. By playing unexpected moves outside of KataGo’s training set, a much weaker adversarial Go-playing program (one that amateur humans can defeat) can trick KataGo into losing.

To wrap our minds around this achievement and its implications, we spoke to one of the paper’s co-authors, Adam Gleave, a Ph.D. candidate at UC Berkeley. Gleave (along with co-authors Tony Wang, Nora Belrose, Tom Tseng, Joseph Miller, Michael D. Dennis, Yawen Duan, Viktor Pogrebniak, Sergey Levine, and Stuart Russell) developed what AI researchers call an “adversarial policy.” In this case, the researchers’ policy uses a combination of a neural network and a tree-search method (called Monte-Carlo Tree Search) to find Go moves.

KataGo’s world-class AI learned Go by playing millions of games against itself. But that still isn’t enough experience to cover every possible scenario, which leaves room for vulnerabilities from unexpected behavior. “KataGo generalizes well to many novel strategies, but it does get weaker the further away it gets from the games it saw during training,” says Gleave. “Our adversary has discovered one such ‘off-distribution’ strategy that KataGo is particularly vulnerable to, but there are likely many others.”

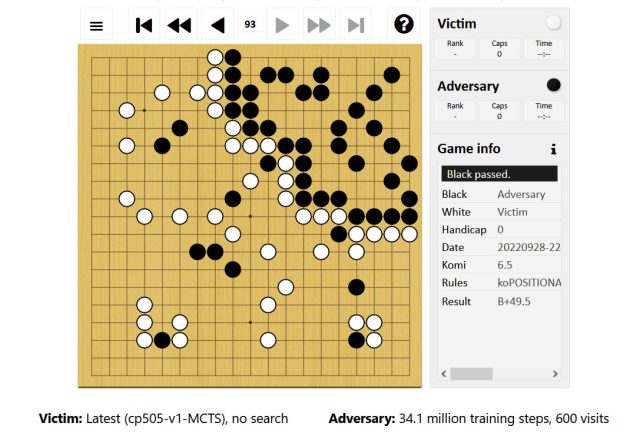

Gleave explains that, during a Go match, the adversarial policy works by first staking a claim to a small corner of the board. He provided a link to an example in which the adversary, controlling the black stones, plays largely in the top-right of the board. The adversary allows KataGo (playing white) to lay claim to the rest of the board, while the adversary plays a few easy-to-capture stones in that territory.

“This tricks KataGo into thinking it’s already won,” Gleave says, “since its territory (bottom-left) is much larger than the adversary’s. But the bottom-left territory doesn’t actually contribute to its score (only the white stones it has played do) because of the presence of black stones there, meaning it’s not fully secured.”

Because of its overconfidence in a win (it assumes it will win if the game ends and the points are tallied), KataGo plays a pass move, allowing the adversary to intentionally pass as well, ending the game. (Two consecutive passes end the game in Go.) After that, a point tally begins. As the paper explains, “The adversary gets points for its corner territory (devoid of victim stones) whereas the victim [KataGo] does not receive points for its unsecured territory because of the presence of the adversary’s stones.”
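To make the scoring trick concrete, here is a minimal Python sketch of area scoring under simplified Tromp-Taylor-style rules. That ruleset is an assumption here, since the article does not say which rules these games used. Under such rules, every stone left on the board counts for its owner, and an empty region counts for a player only if it borders that player’s stones alone; nothing is removed as dead at the end. The function `tromp_taylor_score` and the two toy 5×5 positions are hypothetical illustrations, not code from KataGo or the paper, and komi is ignored.

```python
from collections import deque


def tromp_taylor_score(board: list[str]) -> dict[str, int]:
    """Area-score a finished position (hypothetical helper, simplified rules).

    'B' = black stone, 'W' = white stone, '.' = empty point.
    Assumes Tromp-Taylor-style scoring: no dead stones are removed.
    """
    rows, cols = len(board), len(board[0])
    score = {"B": 0, "W": 0}
    seen: set[tuple[int, int]] = set()

    for r in range(rows):
        for c in range(cols):
            point = board[r][c]
            if point in score:
                # Every stone left on the board counts for its owner.
                score[point] += 1
                continue
            if (r, c) in seen:
                continue
            # Flood-fill this empty region and record which colors border it.
            region_size, borders = 0, set()
            queue = deque([(r, c)])
            seen.add((r, c))
            while queue:
                y, x = queue.popleft()
                region_size += 1
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if not (0 <= ny < rows and 0 <= nx < cols):
                        continue
                    neighbor = board[ny][nx]
                    if neighbor == ".":
                        if (ny, nx) not in seen:
                            seen.add((ny, nx))
                            queue.append((ny, nx))
                    else:
                        borders.add(neighbor)
            # An empty region scores only if it reaches exactly one color.
            if len(borders) == 1:
                score[borders.pop()] += region_size
    return score


# Toy 5x5 position: Black holds a tiny top-right corner, White walls off the rest.
secured = ["..WB.", "..WBB", "..WWW", ".....", "....."]
# Same position, but Black has left two stones inside White's area.
invaded = ["..WB.", "..WBB", "..WWW", ".B...", "...B."]

print(tromp_taylor_score(secured))  # {'B': 4, 'W': 21}
print(tromp_taylor_score(invaded))  # {'B': 6, 'W': 5}
```

In the second position, the two black stones turn White’s 16 points of apparent territory into a region that reaches both colors, so White scores only its 5 stones while Black’s small corner is enough to edge ahead. That mirrors, in miniature, why passing is a mistake for KataGo in the games Gleave describes.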

Despite this clever trickery, the adversarial policy alone is not that great at Go. In fact, human amateurs can defeat it relatively easily. Instead, the adversary’s sole purpose is to attack an unanticipated vulnerability of KataGo. A similar scenario could apply to almost any deep-learning AI system, which gives this work much broader implications.

“The research shows that AI systems that seem to perform at a human level are often doing so in a very alien way, and so can fail in ways that are surprising to humans,” explains Gleave. “This result is entertaining in Go, but similar failures in safety-critical systems could be dangerous.”

Imagine, for example, a self-driving car AI that encounters a wildly unlikely scenario it doesn’t expect, allowing a human to trick it into performing dangerous behaviors. “[This research] underscores the need for better automated testing of AI systems to find worst-case failure modes,” says Gleave, “not just test average-case performance.”

A half-decade after AI finally triumphed over the best human Go players, the ancient game continues its influential role in machine learning. Insights into the weaknesses of Go-playing AI, once broadly applied, may even end up saving lives.
